Introduction:

One of the great things about my role at Uila is that I get to work directly with customers and see firsthand how they use our software in their environments and how it helps solve their real-world, everyday issues. Demos, webinars, and whitepapers are excellent sources of product information, but the best way to prove product value and maintain a feedback loop to our software designers is to become a detective of sorts: observing and understanding how the software runs once it is installed in the customer's data center.

In this post, and in more to come, this Root Cause Detective will share as many details and screenshots as possible (with identifiable information like IP addresses and machine names removed, of course!). So please read on...

IT Root Cause Detective - Case File #2: Objectivity

In the first Root Cause Detective post, I reviewed the case of a slow ERP application at Carolina Biological. Now I am continuing the series with an investigation into a performance issue in the software development environment at Objectivity, Inc. As always, I've used actual screenshots but have masked any confidential information.

Case File #2: Objectivity, Inc.

Objectivity has been providing "big data" analytics software for mission-critical applications since 1981, long before anyone in the industry began using the term (if you are curious about history like I am, the first references appeared in the 1990s, and the term got its own Wikipedia entry in 2010). To put that in context, in 1981 state-of-the-art business computing looked like this:

Source: HP Computer Museum

Today, Objectivity uses modern development frameworks to provide graph analytics and a database platform used by Global 1000 companies. The company relies heavily on virtualization to give its development and QA teams the flexibility they need to collaborate, with about 15 hosts and 125 VMs supporting the engineering team.

Call the Detective

Normally, you would expect virtualization to speed up processes like software testing; it enables organizations like Objectivity to get build times down to 3 hours or less. But QA regression testing at Objectivity would often take 12 to 24 hours, which is far too long to keep the team productive. In addition, their project collaboration server, part of their continuous integration and continuous delivery (CI/CD) process, would experience random slowdowns.

Objectivity's standard in-house network and infrastructure monitoring tools were not giving the IT team clear visibility into the issues. The hosts and VMs appeared healthy, but something was clearly wrong. Engineering productivity was down, and Objectivity needed answers: a solution that could identify bottlenecks in their engineering production system. Objectivity was introduced to Uila through our reseller, Axcelerate Networks.

Observations & Resolution

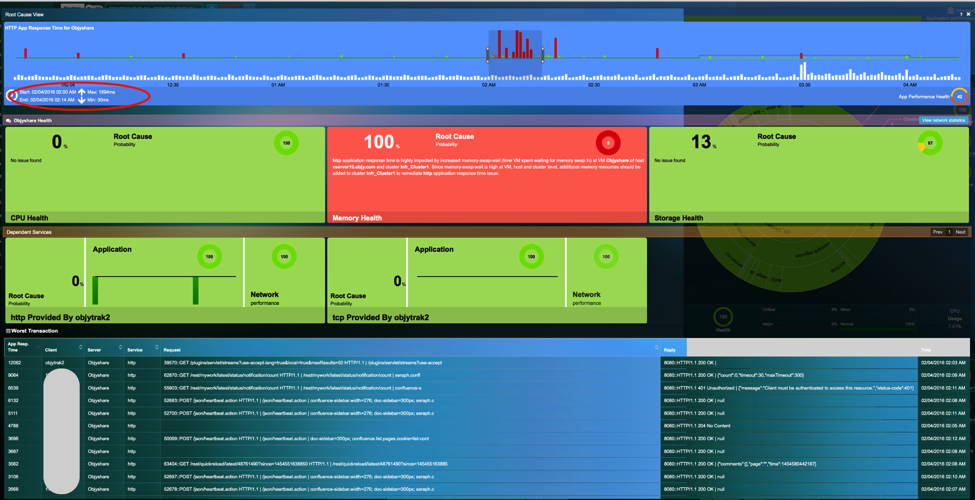

After I helped them get Uila up and running, we spotted a pattern right away. The overall QA test system performance would drop daily, typically around the same time.

Digging in further, we found a 600ms response time on a build server. Since completing a build requires sending a steady stream of sequential data, it was no wonder some regression tests were taking up to a day to complete!

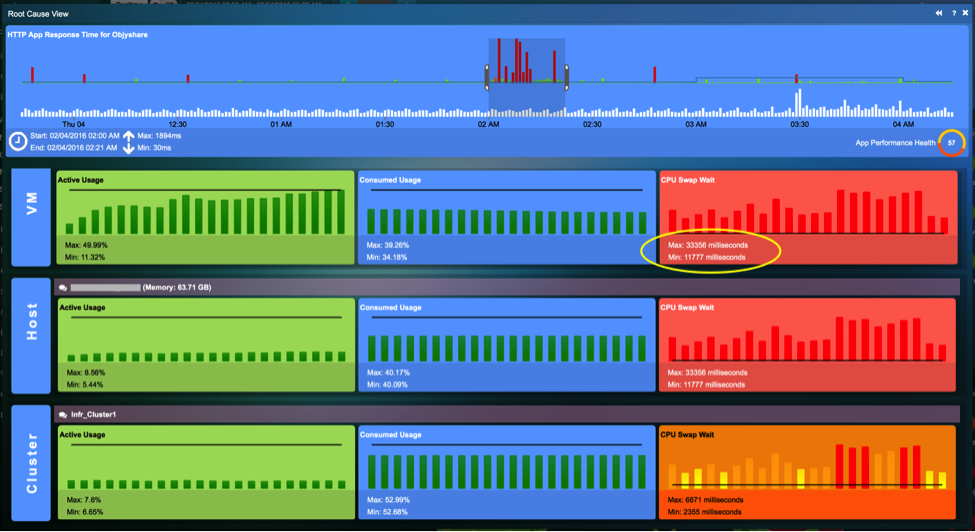

As we continued digging, the Uila root cause dashboard identified potential memory constraints on some of the VMs. The Objectivity IT team had previously checked memory utilization in vCenter and other tools, but average usage across the host was only 40 percent. Something else was going on, and it needed to be identified quickly.

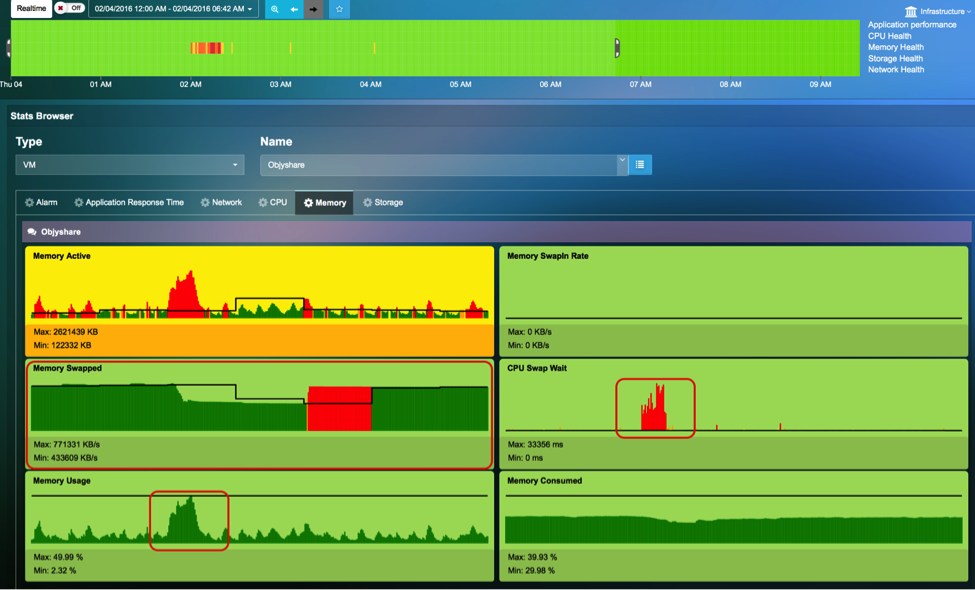

With Uila, we were able to look at memory, percent ready, swap wait times and other metrics, and then correlate that with the performance issue. It turned out that some VMs were seeing swap wait times of up to 33,000 milliseconds, or 33 seconds. The VMs were also pegged at 100 percent memory utilization and were swapping to disk constantly. No application (or user) will work well under those conditions.

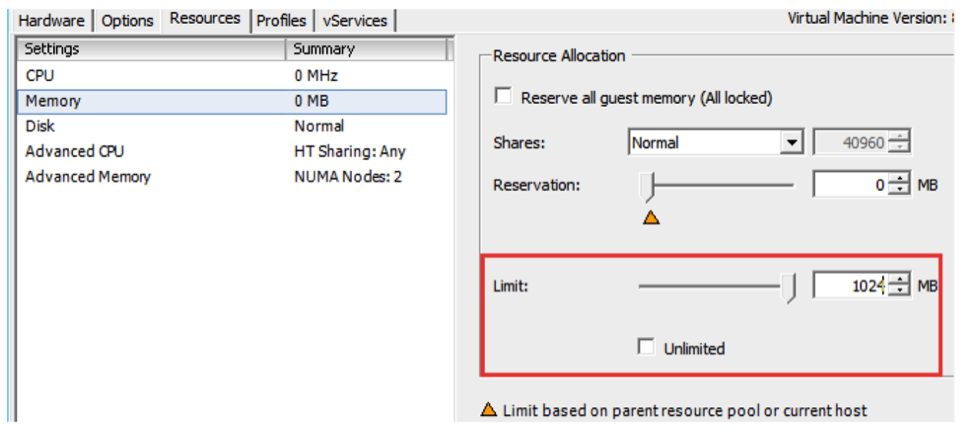

What happened? It turns out when you clone a VM, any memory and CPU limits you have set are carried over. Some of the VMs had been cloned from non-production instances, which had been configured to use a limited amount of memory. Although the host had plenty of memory available, VMs were prevented from accessing it even when they were swapping to disk.
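Because a host-wide average hides per-VM caps, a simple audit compares each VM's configured memory with its memory limit. The sketch below is illustrative only: the `VmMemoryConfig` record and `find_capped_vms` function are hypothetical names, not part of Uila or any VMware API, and it assumes the vSphere convention that a memory limit of -1 means "unlimited". In practice the values would come from vCenter or an inventory export.

```python
from dataclasses import dataclass


# Hypothetical inventory record; field names are illustrative, not a vSphere API.
@dataclass
class VmMemoryConfig:
    name: str
    configured_mb: int  # memory size presented to the guest OS
    limit_mb: int       # upper bound on host memory the VM may use; -1 = unlimited


def find_capped_vms(vms):
    """Return names of VMs whose memory limit is below their configured memory."""
    capped = []
    for vm in vms:
        # A limit of -1 means no cap, so only non-negative limits can constrain the VM.
        if 0 <= vm.limit_mb < vm.configured_mb:
            capped.append(vm.name)
    return capped


# Hypothetical example data: a clone that inherited a small limit from its template.
inventory = [
    VmMemoryConfig("build-01", configured_mb=16384, limit_mb=4096),
    VmMemoryConfig("qa-02", configured_mb=8192, limit_mb=-1),
    VmMemoryConfig("collab-01", configured_mb=8192, limit_mb=8192),
]
print(find_capped_vms(inventory))  # ['build-01']
```

A VM flagged this way can be starved of memory and forced to swap no matter how much free memory its host reports, which matches the pattern we saw at Objectivity.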

The solution ended up being simple. VMware had published a KB article on the problem, and once we identified it, Objectivity was able to configure appropriate memory limits.

After a quick adjustment in vCenter (as shown above), the regression test times dropped back down to acceptable levels of about 3 to 5 hours.

Roger’s Case #2 - Root Cause Detective Report:

With Uila Application-Centric Infrastructure Monitoring software running 24x7 in Objectivity's data center to keep performance issues from impacting engineering productivity, we expect Objectivity to continue innovating and delivering success for its customers. Wee-luh (Uila) looks forward to being a part of this.

# # #

If you are interested in working with Uila's "Root Cause Detective" to analyze your data center performance issues, please ask. If we can't resolve it, you may be entitled to a $50 Amazon gift card for "stumping the detective."
